By Dr. Thomas P. Keenan, FCIPS, I.S.P., ITCP in collaboration with Ron Murch, I.S.P., ITCP – both with the University of Calgary.

In a session led by David Masson, Director of Enterprise Security at Darktrace, and moderated by Martin Kyle, CISO of Payments Canada, a group of leading Canadian experts explored the globalization of computer security risks and what CISOs can do to manage them.

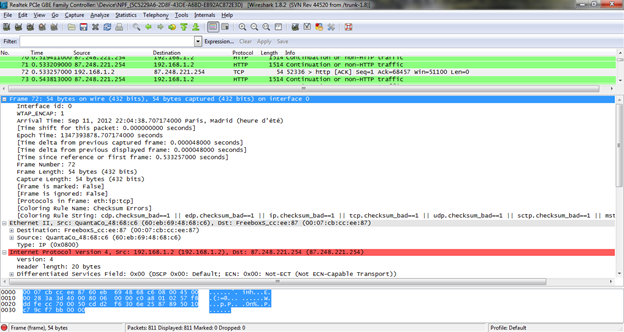

Classic movies about hackers usually showed a slightly chubby dude munching Doritos in his parents’ basement, desperately overdue for a shower. When they needed visuals, Hollywood’s go to image was often Wireshark, an open-source network protocol analyzer that has great graphics to impress the masses.

Image courtesy of Wikimedia Commons, CC-BY-SA 3.0

If they wanted to make a realistic movie about the state of hacking in 2020, the bad guys would be either criminal gangs or “patriotic hackers” and subtitles would probably be required since they’d be speaking a foreign language. Much of the action in the movie would take place inside a computer (like the 1982 classic “Tron”) and the whole thing would be over in a few microseconds.

MACHINE SPEED, AUTOMATED, MACHINE LEARNING, AI – WHAT’S THE DIFFERENCE?

David Masson noted that many cyberattacks “are quite sophisticated and they move faster than people can respond.” There’s an asymmetry when the attackers are using tools like Artificial Intelligence (AI) and the defenders are not. While acknowledging that terms like AI and Machine Learning are sometimes marketing jargon, he outlined a history of these topics and how interest has risen and ebbed.

In the 1970s, for example, if you were an AI researcher, it was hard to get funding because computers weren’t fast enough to do impressive things like processing human speech and answering unstructured questions. Now, of course, a $50 smart speaker can do that easily – using its own power and the cloud intelligence that’s behind it.

[If you’re interested in the history of AI and related disciplines, which is far broader than cybersecurity, the article http://sitn.hms.harvard.edu/flash/2017/history-artificial-intelligence/ and the links at the end of it will tell you what you need to know about these topics.]

LET’S GET SPECIFIC

Masson explained that “Phishing emails can be created by AI so that they are realistic – and more successful” and added that, “The only way to find an AI attack is to have AI looking for it.” Martin Kyle noted that, within the financial sector, there are sophisticated AI-based fraud detection engines which, for example “measure the speed at which a user navigates their account. If the speed is too fast for a human, the transaction gets flagged as potential fraud.”

He also noted that the challenge is to balance ease of use against security. “Some of the security is on the back end so the consumer doesn’t see it.”
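The navigation-speed check Kyle described can be illustrated with a minimal Python sketch. This is not how any particular bank's fraud engine works; the function name `is_too_fast_for_human`, the half-second threshold, and the sample timestamps are all assumptions made for illustration.

```python
from statistics import median

# Hypothetical threshold: the assumed shortest plausible gap, in seconds,
# between two consecutive actions taken by a human user.
MIN_HUMAN_GAP_SECONDS = 0.5


def is_too_fast_for_human(event_times, min_gap=MIN_HUMAN_GAP_SECONDS):
    """Return True when the median gap between consecutive actions is below min_gap.

    event_times is a list of timestamps (in seconds) for one session.
    """
    if len(event_times) < 3:
        return False  # too few actions to judge the session
    ordered = sorted(event_times)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return median(gaps) < min_gap


# Made-up sessions: one paced like a person, one paced like a script.
human_session = [0.0, 2.1, 5.4, 9.0, 12.7]
scripted_session = [0.0, 0.05, 0.11, 0.16, 0.22]

print(is_too_fast_for_human(human_session))     # False
print(is_too_fast_for_human(scripted_session))  # True
```

Using the median rather than the fastest single gap keeps one accidental double-click from flagging an otherwise normal session. A real system would presumably combine many more signals than timing alone.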

In reply to a question about real-world automated attacks, Masson pointed to the 2016 Mirai attack, which left much of the Internet inaccessible to users on the East Coast of the U.S. It was famous for using Internet of Things devices like security cameras and smart light bulbs to launch a distributed denial of service attack. Even if it was discovered and deactivated on a device, Mirai would automatically try to re-infect it – and it was usually successful.

Although Mirai was feared to be a nation state attack, it turned out to be the work of Rutgers University student Paras Jha, who was looking for mischievous fun as well as ways to make money from the Internet. In 2018 he was sentenced to six months of home confinement and 2,500 hours of community service, and ordered to pay restitution of $8.6 million, which is what his university estimated it cost them to deal with his mischief.

The Mirai botnet source code was released into the wild, so nation states might well pick it up and use all or parts of it in future attacks. Analysis also shows that it contains some strings written in Russian, which underlines that there is a global economy of malicious code exchange.

THE OODA LOOP

Kyle introduced a concept familiar to fighter pilots, the OODA loop: Observe, Orient, Decide, Act. He noted that “if a fighter pilot can do that faster than an adversary, they’ll likely win the fight.” The cybersecurity equivalent is to be able to spot and neutralize a threat, even if you’ve never seen it before. Masson believes that, contrary to the popular lore that attackers have the advantage, defenders should actually be in the better position, since they know their network, technology, infrastructure, policies, and procedures, while the intruder has to discover all of this. “If we’ve got a real understanding we should spot their arrival even if it’s AI.” He said that there will always be “zero days” (new attacks that exploit a previously unknown vulnerability, and for which there is no detection signature or mitigation patch). Almost by definition, “you can’t spot zero days, but you can spot changes of your network.”
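The idea of spotting changes in your own network, rather than matching known signatures, can be sketched as a simple comparison against a learned baseline. The Python below is only an illustration under stated assumptions and does not represent Darktrace's or any other vendor's method; the function name `deviates_from_baseline`, the z-score threshold of 3, and the connection counts are hypothetical.

```python
from statistics import mean, stdev


def deviates_from_baseline(history, observed, z_threshold=3.0):
    """Return True when observed lies more than z_threshold standard
    deviations away from the mean of the device's own history.

    history: past measurements for one device, for example hourly
    outbound connection counts (an assumed metric).
    """
    if len(history) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


# Made-up hourly outbound connection counts for one device.
baseline = [40, 42, 38, 41, 39, 43, 40]

print(deviates_from_baseline(baseline, 41))   # False
print(deviates_from_baseline(baseline, 400))  # True
```

No signature of the attack is needed here: the check knows only what normal looks like for this device, which is the defender's advantage Masson describes.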

Attendees were polled on the question “Have you ever had to face a zero day in your career?” The result: Yes 45%, No 55%.

DEALING WITH ZERO DAY ATTACKS

The combination of automation (or AI) and a zero day is particularly worrisome, since it can go “galloping through your network.” Masson gave the example of the Maersk shipping company, where people were running down the halls screaming “unplug your computers.” He also discussed the global intrusion campaign reported in March 2020 and attributed to the group known as APT41, which targeted industries such as banking and finance, media, oil and gas, and, he said, law firms. The bad actors behind it “exploited the attack and used it as quickly as they could before anyone could respond with a signature to detect and defend.”

One CISO, from the energy sector, reported “We’ve seen a zero day. We were tracking down what we thought was an operational problem, thought it was a bad patch. We knew it was an inconsistency but not a cyber attack.” He estimated it was in the environment for six days before it was spotted. He then asked, “With automated response, is it important to have best of breed or would it be better to have a solid integrated platform?”

Masson replied that it was a good question. “You’re better off taking a unified view than a siloed one, because attackers take a unified view. It is possible to have a self-aware approach.” He summarized his experience with automated attacks as follows: “You can only fight AI with AI. We’ve been using AI for over seven years. This is perhaps the first time in cybersecurity history where the defensive side (the defenders) has had the weapon (AI) before the attackers.”